How to Reduce Accelerated Underwriting Override Rates

Override rates quietly erode the ROI of accelerated underwriting programs. Here's what drives manual escalation and how carriers are bringing those numbers down.

Every carrier running an accelerated underwriting program has the same quiet problem. The program looks good on paper—faster cycle times, lower per-policy costs, happier applicants. But then you look at how many cases that enter the accelerated path actually stay on it. The override rate tells a different story.

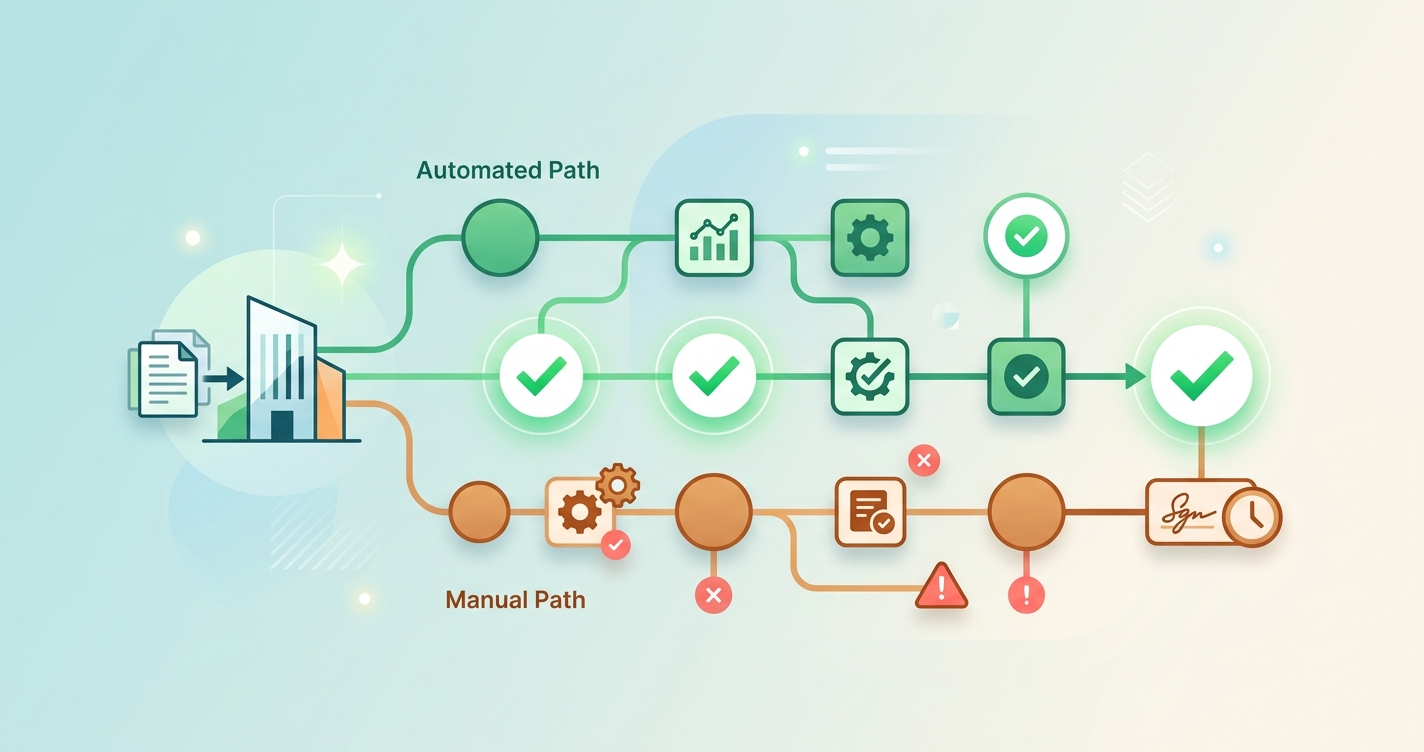

An override happens when a case that was supposed to be decided algorithmically gets escalated to manual review. The underwriter steps in, applies judgment, and the case loses most of the speed and cost advantages that the accelerated path was built to deliver. Too many overrides and the program is essentially a traditional underwriting shop with extra steps bolted onto the front end.

PartnerRe's 2024 Accelerated Underwriting Market Survey of U.S. carriers found that acceleration rates (the percentage of applicants who complete the accelerated path without manual intervention) ranged from 3% to 63% across respondents, with an average of 25%. At the average carrier, the remaining cases fall out to manual review, taking most of the program's economic benefit with them. The width of that range shows how differently carriers manage this problem.
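To make the arithmetic concrete, here is a minimal Python sketch of how an acceleration rate and its complementary fallout rate relate. It assumes every case that enters the accelerated path either completes it automatically or is escalated. The function name and the sample counts are illustrative, not taken from the survey.

```python
from __future__ import annotations


def acceleration_and_fallout(entered: int, completed_automatically: int) -> tuple[float, float]:
    """Return (acceleration_rate, fallout_rate) as fractions of cases entered."""
    if entered <= 0:
        raise ValueError("entered must be positive")
    if not 0 <= completed_automatically <= entered:
        raise ValueError("completed_automatically must be between 0 and entered")
    acceleration = completed_automatically / entered
    # Assumption: any case that does not complete automatically was escalated.
    return acceleration, 1.0 - acceleration


# Illustrative counts only: 1,000 cases enter, 250 finish without manual review.
accel, fallout = acceleration_and_fallout(1000, 250)
print(f"acceleration {accel:.0%}, fallout {fallout:.0%}")
```

With these sample counts the sketch reports 25% acceleration and 75% fallout, matching the survey average quoted above.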

What actually drives high override rates in accelerated underwriting

Override rates don't come from one broken thing. They accumulate from dozens of small design decisions that each add a few percentage points of fallout. Understanding which levers matter most is where the real work starts.

Eligibility thresholds that are too tight

The most common source of overrides is eligibility rules that were set conservatively at launch and never updated. Most carriers build their initial accelerated programs with narrow bands—age under 45, face amount under $500,000, no prescription flags in the last two years. Those thresholds reflect launch-day risk appetite, not what the data has since shown.

RGA's research on accelerated underwriting program evolution found that carriers who periodically revisit and expand their eligibility criteria based on mortality experience data see acceleration rates improve by 10 to 15 percentage points over three years, without measurable deterioration in actual-to-expected mortality ratios. (We covered how to set these thresholds in our analysis of biometric eligibility criteria.)
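One way to keep eligibility bands easy to revisit is to hold them in a small configuration object instead of scattering them through rule code. The sketch below encodes the launch-day bands mentioned above (age under 45, face amount under $500,000, no prescription flags in the last two years). The class names, field names, and the "expanded" age band are hypothetical and are not a recommendation.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EligibilityRules:
    max_age_exclusive: int = 45         # "age under 45"
    max_face_exclusive: int = 500_000   # "face amount under $500,000"
    rx_lookback_years: float = 2.0      # "no prescription flags in the last two years"


@dataclass(frozen=True)
class Applicant:
    age: int
    face_amount: int
    years_since_rx_flag: Optional[float]  # None means no flag on record


def ineligibility_reasons(applicant: Applicant, rules: EligibilityRules) -> list[str]:
    """List which bands the applicant falls outside; an empty list means eligible."""
    reasons = []
    if applicant.age >= rules.max_age_exclusive:
        reasons.append("age")
    if applicant.face_amount >= rules.max_face_exclusive:
        reasons.append("face_amount")
    if (applicant.years_since_rx_flag is not None
            and applicant.years_since_rx_flag < rules.rx_lookback_years):
        reasons.append("rx_flag")
    return reasons


launch_rules = EligibilityRules()
expanded_rules = EligibilityRules(max_age_exclusive=50)  # hypothetical expansion

applicant = Applicant(age=47, face_amount=400_000, years_since_rx_flag=None)
print(ineligibility_reasons(applicant, launch_rules))    # falls out on age
print(ineligibility_reasons(applicant, expanded_rules))  # no reasons: eligible
```

Returning the reasons rather than a bare yes/no makes it possible to count which band causes the most fallout, which is the input a periodic threshold review needs.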

Data source gaps and conflicts

When an applicant's prescription history says one thing and their MIB record says another, the algorithm doesn't know which to trust. So it escalates. These conflict-driven overrides are particularly frustrating because they often resolve quickly once a human looks at them—the discrepancy turns out to be a coding error, a name match issue, or outdated information in one source.

The Society of Actuaries' 2020 research on simplified issue underwriting documented that data quality issues account for roughly 20% of cases that fall out of automated decision paths. Not risk issues. Data issues.

Model confidence thresholds set for launch, not maturity

Predictive models in accelerated programs typically output a risk score along with a confidence level. When the model is less certain about a score, the case gets kicked to manual review. The problem is that confidence thresholds are usually calibrated during initial deployment when the model has limited production data. As the model sees more cases and performance data accumulates, those thresholds often remain unchanged.

Munich Re's automation solutions team reported that carriers who recalibrate their model confidence thresholds annually see straight-through processing (STP) rates improve by 8 to 12 percentage points within the first two recalibration cycles.
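The effect of a confidence threshold on straight-through processing can be illustrated with a short Python sketch. The confidence values and both threshold settings below are made up for illustration. Whether a lower threshold is actually justified depends on the validation and monitoring a carrier does, which this sketch does not model.

```python
from __future__ import annotations


def stp_rate(confidences: list[float], threshold: float) -> float:
    """Share of cases whose model confidence meets the threshold (decided automatically)."""
    if not confidences:
        raise ValueError("need at least one case")
    auto_decided = sum(1 for c in confidences if c >= threshold)
    return auto_decided / len(confidences)


# Made-up confidence values for ten cases; not real production data.
sample_confidences = [0.97, 0.91, 0.88, 0.84, 0.79, 0.72, 0.66, 0.93, 0.86, 0.81]

LAUNCH_THRESHOLD = 0.90        # hypothetical launch-day setting
RECALIBRATED_THRESHOLD = 0.85  # hypothetical setting after a review

launch_rate = stp_rate(sample_confidences, LAUNCH_THRESHOLD)
recalibrated_rate = stp_rate(sample_confidences, RECALIBRATED_THRESHOLD)
print(f"launch: {launch_rate:.0%}, recalibrated: {recalibrated_rate:.0%}")
```

On this toy sample, three of ten cases clear the launch threshold and five of ten clear the recalibrated one. The cases between the two thresholds are the ones a recalibration review would need to examine against actual outcomes.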

Comparing override reduction approaches

Not every method of reducing overrides carries the same risk profile or implementation cost. The table below breaks down the main approaches carriers are using.

| Approach | Override reduction potential | Implementation effort | Risk to mortality experience | Time to impact |
|---|---|---|---|---|
| Expand eligibility bands based on experience data | 10–15 percentage points | Low (rule changes) | Low if data-backed | 1–3 months |
| Add supplementary data sources (EHR, clinical) | 8–12 percentage points | Medium (vendor integration) | Low (more data = better decisions) | 6–12 months |
| Recalibrate model confidence thresholds | 8–12 percentage points | Low (model tuning) | Medium (requires monitoring) | 1–2 months |
| Implement tiered escalation (partial auto-decisions) | 5–10 percentage points | Medium (workflow redesign) | Low | 3–6 months |
| Fix data conflict resolution logic | 5–8 percentage points | Medium (rules engine work) | Very low | 2–4 months |
| Reduce manual override authority for borderline cases | 3–5 percentage points | Low (policy change) | Medium (cultural resistance) | Immediate |
| Deploy real-time biometric data (rPPG, vitals) | 10–20 percentage points | High (new data pipeline) | Low (additive signal) | 6–18 months |

The carriers seeing the best results are combining multiple approaches. Expanding eligibility while simultaneously fixing data conflict logic and recalibrating confidence thresholds can compound improvements in ways that none of those changes would deliver alone.

Where real-time biometric data fits

Replacing missing data with present-moment measurement

One of the structural problems with current accelerated underwriting programs is that they rely on historical records—prescriptions filled, diagnoses coded, lab results from some prior doctor visit. Historical data is inherently incomplete. People change doctors, move states, pay cash for prescriptions. Every gap in the historical record is a potential override trigger.

Real-time biometric screening flips this. Instead of assembling a mosaic of stale records and hoping they're complete, the applicant provides a current physiological snapshot. Camera-based remote photoplethysmography (rPPG) can capture heart rate, heart rate variability, respiratory rate, and blood pressure estimates from a smartphone camera in under 60 seconds.

Research from Professor Daniel McDuff, formerly at Microsoft Research and now at Google, has demonstrated that rPPG-based vital sign measurement from standard RGB cameras achieves correlation coefficients above 0.95 with contact-based sensors for heart rate measurement under controlled conditions.

Filling the confidence gap

The most immediate impact of real-time biometric data on override rates comes from cases where the algorithm is uncertain. When an applicant's prescription history raises a question about cardiovascular health but there's no recent clinical data to confirm or deny it, that's an override. If the same applicant provides a 30-second facial scan showing normal resting heart rate and blood pressure, the algorithm has a second signal to weigh against the prescription flag.

This doesn't replace medical judgment. It gives the model another input, and that additional input can push marginal cases above the confidence threshold without human intervention.

What the numbers suggest

Carriers piloting biometric screening in their accelerated programs report that the additional data point resolves 30 to 40% of cases that would otherwise escalate to manual review due to insufficient health data. The screening doesn't change the risk decision. It provides enough information for the existing model to make the decision automatically.

Operational changes that matter as much as technology

Tiered escalation instead of binary auto/manual

Most accelerated programs have two paths: fully automated decision or full manual underwriting review. There's nothing in between. A tiered approach introduces intermediate steps—automated decision with underwriter notification, automated decision with delayed quality review, or partial acceleration where certain aspects are auto-decided while a specific concern gets targeted review.

Appian's research on accelerated underwriting workflows found that carriers implementing tiered escalation structures reduced their full-manual override rates by 15 to 25%, because many cases that get kicked to manual review only need a human to look at one specific aspect, not re-underwrite the entire application.
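A tiered structure can be sketched as a routing function that returns one of several paths instead of a binary auto/manual flag. The path names follow the intermediate steps described above. The confidence cut-offs, the ordering of the intermediate tiers, and the idea of routing on a list of open concerns are assumptions made for illustration, not a documented carrier workflow.

```python
from __future__ import annotations

from enum import Enum


class Path(Enum):
    AUTO = "automated decision"
    AUTO_NOTIFY = "automated decision with underwriter notification"
    AUTO_DELAYED_QA = "automated decision with delayed quality review"
    TARGETED_REVIEW = "partial acceleration with targeted review"
    FULL_MANUAL = "full manual review"


def route(confidence: float, open_concerns: list[str]) -> Path:
    """Pick an escalation tier. All cut-offs below are hypothetical."""
    if len(open_concerns) > 1:
        return Path.FULL_MANUAL          # several issues: re-underwrite the case
    if len(open_concerns) == 1:
        return Path.TARGETED_REVIEW      # a human looks at one specific aspect
    if confidence >= 0.90:
        return Path.AUTO
    if confidence >= 0.80:
        return Path.AUTO_NOTIFY
    if confidence >= 0.70:
        return Path.AUTO_DELAYED_QA
    return Path.FULL_MANUAL


print(route(0.95, []).value)
print(route(0.95, ["rx_conflict"]).value)
print(route(0.60, []).value)
```

The design point is that a single open concern leads to a targeted review of that concern rather than a full re-underwrite of the application.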

Underwriter override authority and feedback loops

Here's an uncomfortable fact about override rates: some percentage of them come from underwriters exercising manual authority to override algorithmic decisions they disagree with, even when the algorithm's decision falls within approved guidelines. This isn't negligence. It's professional judgment. But when it happens systematically without feedback into the model, it creates a permanent floor under your override rate.

The NAIC's Accelerated Underwriting Working Group noted in their 2022 regulatory guidance that carriers should track and analyze patterns in manual overrides to distinguish between legitimate risk concerns and preference-based overrides that don't correspond to different mortality outcomes.

Building a structured feedback loop where manual override data gets reviewed monthly and fed back into model training is one of the lowest-cost, highest-impact changes a carrier can make.
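As a starting point for that monthly review, overrides can be tallied by reason and split by whether the algorithm's decision fell within approved guidelines. The sketch below is a minimal illustration: the record fields, reason codes, and sample data are hypothetical. It only counts patterns and does not by itself show whether an override corresponded to a different mortality outcome, which requires experience data.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class OverrideRecord:
    underwriter_id: str
    reason_code: str
    within_guidelines: bool  # True if the algorithm's decision was within approved guidelines


def monthly_override_summary(records: list[OverrideRecord]) -> dict[str, Counter]:
    """Count overrides by reason, split by whether the algorithm was within guidelines."""
    within = Counter(r.reason_code for r in records if r.within_guidelines)
    outside = Counter(r.reason_code for r in records if not r.within_guidelines)
    return {"within_guidelines": within, "outside_guidelines": outside}


# Hypothetical sample of one month's manual overrides.
records = [
    OverrideRecord("uw_01", "rx_history", True),
    OverrideRecord("uw_01", "rx_history", True),
    OverrideRecord("uw_02", "build", True),
    OverrideRecord("uw_02", "mib_conflict", False),
]

summary = monthly_override_summary(records)
# Most frequent reason among overrides of decisions that were within guidelines.
print(summary["within_guidelines"].most_common(1))
```

Reasons that dominate the within-guidelines bucket are the natural candidates for closer review, since those are cases where the underwriter disagreed with a decision the guidelines permitted.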

Data source conflict resolution

Rather than escalating every data conflict to manual review, sophisticated programs build resolution logic. If the MIB record shows a flag from 2018 but the applicant's electronic health records show clean visits through 2025, the resolution logic can weight the more recent and more detailed source. If prescription data shows a medication that contradicts the applicant's self-reported health status, the system can check whether the medication has off-label uses that are benign from an underwriting perspective.

The Equifax accelerated underwriting framework recommends that carriers map each data conflict scenario to a specific resolution pathway rather than defaulting everything to manual review.
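Mapping each conflict scenario to a resolution pathway can be as simple as a lookup table with manual review as the fallback for anything unmapped. The sketch below covers the two examples from this section. The scenario keys, enum names, and the year-comparison helper are hypothetical and are not taken from any vendor framework.

```python
from __future__ import annotations

from enum import Enum


class Resolution(Enum):
    PREFER_MORE_RECENT_SOURCE = "weight the more recent, more detailed source"
    CHECK_BENIGN_OFF_LABEL_USE = "check for benign off-label use"
    MANUAL_REVIEW = "escalate to manual review"


# Hypothetical scenario keys mapped to resolution pathways.
RESOLUTION_PATHWAYS = {
    "mib_flag_older_than_clean_ehr": Resolution.PREFER_MORE_RECENT_SOURCE,
    "rx_contradicts_self_report": Resolution.CHECK_BENIGN_OFF_LABEL_USE,
}


def classify_mib_vs_ehr(mib_flag_year: int, last_clean_ehr_year: int) -> str:
    """Name the scenario when an MIB flag and clean EHR visits disagree."""
    if last_clean_ehr_year > mib_flag_year:
        return "mib_flag_older_than_clean_ehr"
    return "unmapped"


def resolve(scenario: str) -> Resolution:
    """Look up the pathway; anything unmapped still goes to a human."""
    return RESOLUTION_PATHWAYS.get(scenario, Resolution.MANUAL_REVIEW)


print(resolve(classify_mib_vs_ehr(2018, 2025)).value)
print(resolve("rx_contradicts_self_report").value)
print(resolve("name_mismatch").value)
```

Keeping manual review as the default means that adding a new pathway is an explicit decision, and scenarios nobody has mapped yet are never auto-resolved by accident.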

Current research and evidence

The SOA's ongoing mortality experience studies for accelerated underwriting programs are beginning to produce multi-year data. Their 2020 simplified issue underwriting research found that programs with well-designed automated decision paths showed no statistically significant difference in mortality experience compared to traditionally underwritten cohorts in the same age and face amount bands.

PartnerRe's 2024 market survey remains the most comprehensive snapshot of where U.S. carriers actually stand. The finding that acceleration rates average 25% suggests that the industry is still early in optimizing these programs. Carriers at the top of the range (above 50% acceleration) tend to be those that have been running programs for five or more years and have iterated multiple times on their eligibility rules and model calibration.

The NAIC's Accelerated Underwriting Working Group continues to develop regulatory frameworks that balance innovation with consumer protection. Their work on algorithmic fairness and disparate impact testing is particularly relevant for carriers expanding eligibility criteria, since widening the aperture of who qualifies for acceleration must be done without introducing discriminatory outcomes.

The future of override rate management

The carriers that will get override rates below 10% in the next three to five years will be those that treat override reduction as a continuous optimization problem, not a one-time program design exercise. That means quarterly reviews of override patterns, annual model recalibration, ongoing expansion of data sources, and a willingness to let the data overrule institutional inertia.

Real-time biometric data is the next frontier here. Every additional signal that enters the decision model at the point of application reduces the probability that the model won't have enough information to decide. Companies like Circadify are building camera-based vital sign capture that can provide that real-time physiological data directly within the application flow—no devices, no lab visits, no waiting.

The goal isn't to eliminate human judgment from underwriting. It's to make sure human judgment gets applied where it actually adds value, not where it's compensating for incomplete data or overly conservative algorithms.

Frequently asked questions

What is an accelerated underwriting override rate?

The override rate measures the percentage of cases that enter an accelerated underwriting path but get escalated to manual review before a decision is issued. A high override rate means the program isn't delivering on its promise of faster, cheaper decisions. Industry averages hover around 75% fallout (only 25% of cases complete the automated path), though top-performing carriers achieve fallout rates below 50%.

What is a good target for override rate reduction?

There's no universal benchmark because programs vary so much in scope. A carrier accelerating only preferred-plus applicants under age 40 should expect very low override rates—under 10%. A carrier with broader eligibility running through age 60 and higher face amounts might target 30 to 40% override rates. The right target depends on your risk appetite, your data sources, and how long your program has been running.

How does real-time biometric data reduce overrides?

Real-time biometric screening provides current physiological data (heart rate, blood pressure, respiratory rate) at the point of application. This fills information gaps that would otherwise force the algorithm to escalate to manual review. When the model has more complete data, it can make more decisions automatically without sacrificing accuracy.

How often should carriers recalibrate their accelerated underwriting models?

Annual recalibration is the minimum. Carriers with high override rates should consider semi-annual reviews of model confidence thresholds and quarterly reviews of override patterns. The goal is to identify systematic override causes and address them through rule changes or model updates, rather than accepting the override rate as fixed.