What Is a Digital Health Waterfall? Ordering Data Sources for Speed and Cost

A digital health waterfall determines which underwriting data sources get pulled first. The order matters more than most carriers realize for speed and cost.

The digital health waterfall is the sequence in which an underwriting system pulls data on an insurance applicant. It sounds like plumbing, and in some ways it is. But the order in which you pull data sources determines how fast you can issue a policy, how much each decision costs, and whether your accelerated underwriting program actually accelerates anything.

Most carriers inherited their evidence ordering from a time when everything was manual. Application comes in, order the APS, wait six weeks, maybe schedule a paramedical exam. The "waterfall" was really just a to-do list that moved at whatever speed the vendors allowed. Digital health data has changed what's possible here, but plenty of programs still run their data sources in an order that made sense in 2015 and makes less sense now.

Munich Re's EHR retro study found that positioning electronic health record data as the first piece of evidence ordered, combined with application disclosures, MIB activity, and MVR checks, materially improved the accuracy of accelerated underwriting decisions while reducing the need for downstream evidence pulls. The ordering itself was the intervention.

How a digital health waterfall works

A waterfall in underwriting is a sequential, conditional data-pull architecture. You start with the cheapest and fastest data sources. If those sources give you enough signal to make a decision, you stop. If they don't, you cascade down to the next tier of evidence, which is typically slower and more expensive. The whole point is to avoid pulling data you don't need.

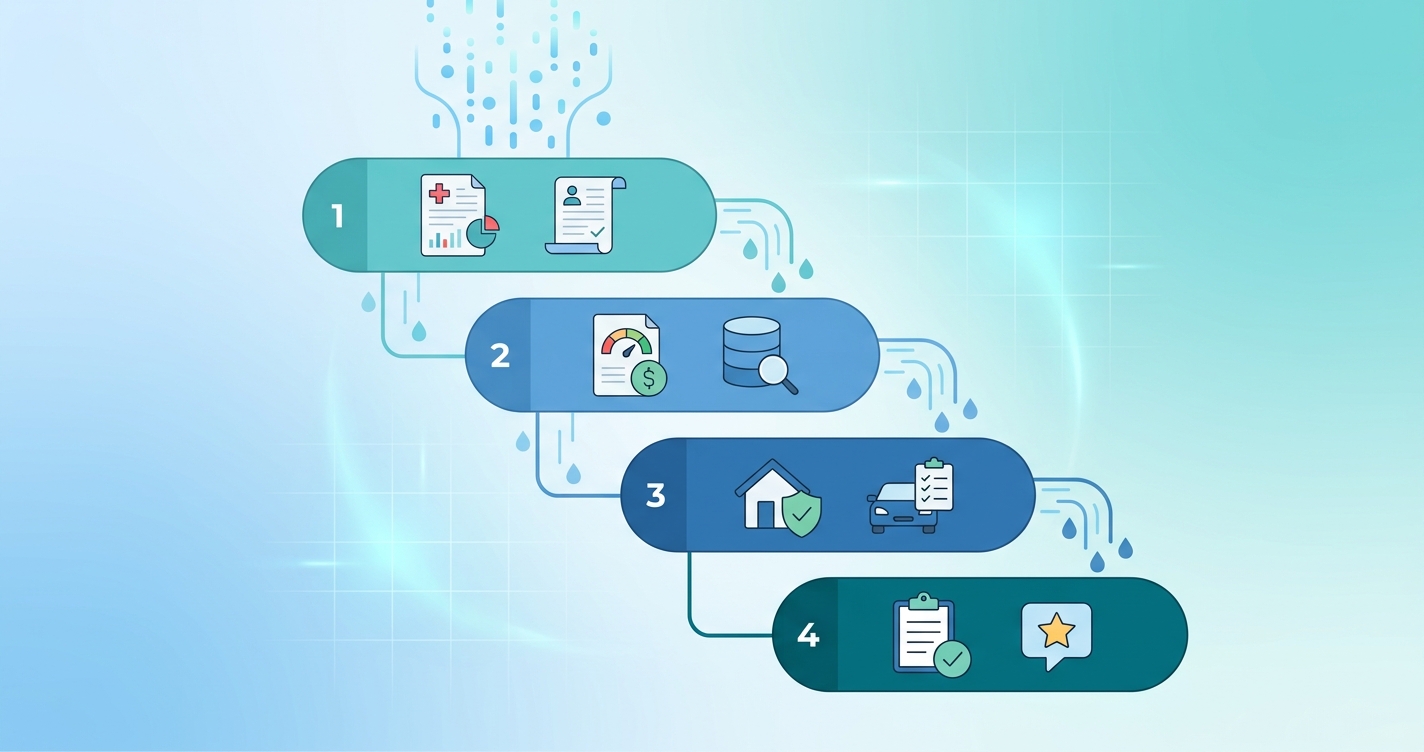

In practice, a modern digital health waterfall might look like this:

Tier 1 — Instant, low-cost: Application data, MIB check, prescription history (Rx), motor vehicle record (MVR), identity verification. These come back in seconds and cost a few dollars each. Most carriers already run these first.

Tier 2 — Fast, moderate cost: Electronic health records (EHR), claims-based clinical data, credit-based mortality scores. EHR retrieval has gotten faster. MIB's electronic medical data product and vendors like Human API can return structured records in minutes rather than weeks. This tier is where most of the recent innovation has happened.

Tier 3 — Real-time biometric: Contactless vital signs screening via smartphone. Heart rate, heart rate variability, respiratory rate, blood oxygen. This is newer to the waterfall. The data is collected from the applicant during the application process itself, typically in under 60 seconds. It costs almost nothing per scan at scale.

Tier 4 — Slow, expensive: Attending physician statements (APS), paramedical exams, lab work. These are the evidence sources you're trying to avoid for most applicants. An APS can take 4-8 weeks and cost $50-200. A paramedical exam runs $100-150 and requires scheduling.

The waterfall logic is simple: pull Tier 1, evaluate. If sufficient, decide. If not, pull Tier 2, re-evaluate. Continue until you have enough information to classify the risk or until you've exhausted the automated sources and need to route to a human underwriter.
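To make the loop concrete, here is a minimal sketch of that pull-evaluate-cascade logic. Everything named here is an assumption for illustration: the `Tier` class, the `run_waterfall` function, the stub pulls, the dollar costs, and the toy `decide` rule are hypothetical and stand in for real vendor calls and a real decision engine.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Tier:
    name: str
    cost: float                      # illustrative dollars per pull
    pull: Callable[[dict], dict]     # stub that returns evidence for an applicant


def run_waterfall(applicant: dict, tiers: list,
                  decide: Callable[[dict], Optional[str]]) -> dict:
    """Pull tiers in order and stop as soon as `decide` returns a decision."""
    evidence: dict = {}
    spent = 0.0
    for tier in tiers:
        evidence.update(tier.pull(applicant))
        spent += tier.cost
        decision = decide(evidence)
        if decision is not None:
            return {"decision": decision, "resolved_at": tier.name, "cost": spent}
    # Automated sources exhausted: route to a human underwriter.
    return {"decision": "refer_to_underwriter", "resolved_at": None, "cost": spent}


# Hypothetical stubs: real pulls would call vendor services.
TIERS = [
    Tier("tier1", 20.0, lambda a: {"rx_flags": a["rx_flags"]}),
    Tier("tier2", 35.0, lambda a: {"ehr_flags": a["ehr_flags"]}),
]


def decide(evidence: dict) -> Optional[str]:
    """Toy rule: clean Rx resolves at Tier 1; otherwise a clean EHR resolves at Tier 2."""
    if evidence.get("rx_flags") == 0:
        return "approve"
    if evidence.get("ehr_flags") == 0:
        return "approve"
    return None


clean = run_waterfall({"rx_flags": 0, "ehr_flags": 0}, TIERS, decide)
borderline = run_waterfall({"rx_flags": 1, "ehr_flags": 0}, TIERS, decide)
complex_case = run_waterfall({"rx_flags": 2, "ehr_flags": 3}, TIERS, decide)
print(clean["resolved_at"], borderline["resolved_at"], complex_case["decision"])
```

The clean applicant never triggers the Tier 2 pull, so the Tier 2 cost is only paid on cases that actually need it. That is the whole economic argument in about twenty lines.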

Why ordering matters more than most carriers think

The sequence isn't arbitrary. It drives three things simultaneously: applicant experience, per-policy cost, and mortality accuracy. Get the order wrong and you spend too much on evidence, lose applicants to friction, or let bad risks slip through.

Swiss Re published an underwriting requirements optimization study that confirmed what many in the industry suspected: accelerated underwriting programs that carefully ordered their evidence pulls showed meaningful improvements in both cost-per-decision and cycle time compared to programs that pulled everything in parallel or used a legacy sequence.

The math is straightforward. If your Tier 1 sources can resolve 40% of applications with no further evidence needed, and your Tier 2 sources resolve another 35%, then only 25% of applicants ever need to cascade to Tier 3 or Tier 4. That's a fundamentally different cost structure than pulling everything on everyone.
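A quick way to see the difference is to work the 40/35/25 split through with some costs. The sketch below is only illustrative: the dollar figures ($20 for Tier 1, $35 for Tier 2, $200 for everything downstream) are assumptions, and `expected_cost` is a hypothetical helper, not a standard formula from any of the studies cited here.

```python
def expected_cost(tier_costs, resolved_share):
    """Expected evidence cost per applicant for a sequential waterfall.

    tier_costs[i]      cost of pulling tier i for one applicant
    resolved_share[i]  share of ALL applicants resolved at tier i
    """
    reaching = 1.0   # share of applicants who reach the current tier
    total = 0.0
    for cost, share in zip(tier_costs, resolved_share):
        total += reaching * cost   # everyone who reaches a tier pays for it
        reaching -= share          # resolved applicants stop here
    return total


COSTS = [20.0, 35.0, 200.0]      # assumed: Tier 1, Tier 2, everything downstream
RESOLVED = [0.40, 0.35, 0.25]    # shares from the paragraph above

waterfall = expected_cost(COSTS, RESOLVED)
pull_everything = sum(COSTS)
print(f"waterfall: ${waterfall:.2f}, pull everything: ${pull_everything:.2f}")
```

Under these assumed costs, the waterfall averages about $91 per applicant against $255 for pulling everything on everyone. The exact numbers will differ by carrier, but the shape of the saving comes from the fact that only the applicants who reach a tier pay for it.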

But here's where it gets interesting. The percentage of applications resolved at each tier depends on what data sources sit in that tier and how good your decision models are at using them. Moving EHR data up from a fallback position into Tier 2, or adding contactless biometrics to Tier 2, changes the resolution rates at every tier below.

| Waterfall position | Data source | Typical cost | Turnaround time | Resolution rate (approx.) |
|---|---|---|---|---|
| Tier 1 | Application + MIB + Rx + MVR | $15-30 bundle | Seconds | 35-45% of applicants |
| Tier 2 | EHR + clinical claims + biometrics | $20-50 per source | Minutes | 30-40% of remaining |
| Tier 3 | Contactless vitals scan | <$5 marginal | 30-60 seconds | 10-20% of remaining |
| Tier 4 | APS + paramedical + labs | $100-350 per applicant | Days to weeks | Remainder |

Those resolution rates are approximate and vary by carrier, product, face amount, and applicant demographics. But the pattern holds: each tier should resolve a meaningful chunk of the remaining pool, and the cost-per-resolution should stay proportional to the face amount at risk.
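Note that the table mixes two bases: Tier 1 is quoted as a share of all applicants, while Tiers 2 and 3 are shares of whoever is left. A small sketch like the one below converts everything to a share of all applicants. It uses the midpoints of the table's ranges (40%, 35%, 15%) purely as illustrative inputs, and `shares_of_all` is a hypothetical helper name.

```python
def shares_of_all(conditional_rates):
    """Convert 'share of remaining pool' rates into shares of all applicants.

    Returns (per-tier shares, leftover share that falls through to the last tier).
    """
    remaining = 1.0
    shares = []
    for rate in conditional_rates:
        resolved = remaining * rate
        shares.append(resolved)
        remaining -= resolved
    return shares, remaining


MIDPOINTS = [0.40, 0.35, 0.15]   # illustrative midpoints for Tiers 1-3
shares, tier4 = shares_of_all(MIDPOINTS)
for i, share in enumerate(shares, start=1):
    print(f"Tier {i}: {share:.1%} of all applicants")
print(f"Tier 4: {tier4:.1%} of all applicants")
```

With these midpoints, Tier 2 resolves about 21% of all applicants, Tier 3 roughly 6%, and about a third of the original pool still reaches Tier 4. Running your own rates through the same conversion is a useful sanity check before comparing them against figures quoted on a different base.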

The EHR-first argument

Munich Re's research on leading with electronic health records has gotten a lot of attention, and for good reason. Their retrospective study looked at what happens when you reorder the waterfall to pull EHR data earlier in the sequence rather than treating it as a fallback.

The findings were pretty clear. When EHR data was positioned as the first medical evidence source (after basic identity and database checks), the underwriting decisions improved in accuracy compared to programs that relied on Rx-only or MIB-only at the front of the waterfall. The EHR data provided enough clinical context to either confirm the accelerated path or flag the case for additional review before expensive evidence was ordered.

MIB has been pushing a similar message with their electronic medical data product, arguing that structured EHR data designed for underwriting consumption reduces the need for traditional APS orders. Their pitch is that the data is already digital, already structured, and already sitting in health information exchanges. The underwriting industry just needs to pull it earlier in the process.

There's a practical wrinkle here. EHR hit rates vary by geography, age, and healthcare utilization. A 25-year-old applicant who hasn't seen a doctor in three years might not have meaningful EHR data to pull. A 55-year-old with a primary care physician and a specialist or two will have plenty. This means the waterfall can't be one-size-fits-all. The optimal ordering might differ based on what you already know about the applicant from Tier 1.
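One way to express that profile-dependent ordering is sketched below. It assumes the carrier already has some estimate of EHR hit probability for the applicant (for example from its own hit-rate history by age and geography); that estimate, the 0.5 threshold, the source names, and both function names are hypothetical.

```python
def plan_medical_evidence(ehr_hit_probability: float, threshold: float = 0.5) -> list:
    """Order the medical evidence sources based on how likely an EHR hit is."""
    if ehr_hit_probability >= threshold:
        return ["ehr", "clinical_claims", "biometric_scan"]
    # Thin expected EHR footprint: lead with sources that do not depend on it.
    return ["biometric_scan", "clinical_claims", "ehr"]


def first_hit(plan, pull):
    """Walk the plan and return the first source that actually returns data."""
    for source in plan:
        data = pull(source)
        if data:                 # an empty result counts as a miss
            return source, data
    return None, {}


def stub_pull(source):
    """Stub vendor call: this hypothetical applicant has no EHR footprint."""
    return {} if source == "ehr" else {"source": source}


young_plan = plan_medical_evidence(ehr_hit_probability=0.2)
older_plan = plan_medical_evidence(ehr_hit_probability=0.8)
fallback_source, _ = first_hit(older_plan, stub_pull)
print(young_plan[0], older_plan[0], fallback_source)
```

The second function matters as much as the first. Even when the plan leads with EHR, a miss has to fall through to something useful rather than stalling the case.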

Where contactless biometrics fit in the waterfall

Contactless vital signs data from smartphone-based screening occupies a unique spot in the waterfall. Unlike EHR or Rx data, which reflect historical health status, a biometric scan captures the applicant's current physiological state. Heart rate, heart rate variability, respiratory rate, blood oxygen saturation. It's real-time data, not a record of past encounters.

This makes it useful in two places:

As a Tier 2 supplement: the biometric data can confirm or question what the historical records suggest. If Rx data shows no cardiovascular medications but the biometric scan picks up elevated resting heart rate or depressed HRV, that's a signal worth investigating before issuing on the accelerated path.

As a Tier 3 alternative to paramedical exams: for borderline cases that don't quite resolve at Tier 2, a contactless scan can provide enough additional signal to make a decision without ordering a full paramedical exam. The cost difference is substantial: a 30-second phone scan versus a $150 nurse visit.

The placement depends on the carrier's risk tolerance and their confidence in the biometric data. Some programs are running it alongside Tier 2 sources so every applicant gets scanned. Others position it as a Tier 3 triage tool, only triggered when the case doesn't resolve cleanly from database evidence alone.
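The Tier 2 supplement case, where the scan disagrees with a clean Rx history, can be sketched as a simple consistency check. The thresholds, the medication class names, and the field names below are placeholders for illustration only. They are not clinical guidance and do not come from any vendor's specification.

```python
# Illustrative medication classes; a real program would use a coded drug reference.
CARDIO_RX_CLASSES = {"beta_blocker", "ace_inhibitor", "statin", "anticoagulant"}


def biometric_conflicts(rx_classes, vitals, max_resting_hr=100.0, min_hrv_ms=20.0):
    """Flag scans that look inconsistent with a clean cardiovascular Rx history.

    Thresholds are hypothetical placeholders, not underwriting or clinical rules.
    """
    on_cardio_meds = bool(set(rx_classes) & CARDIO_RX_CLASSES)
    flags = []
    if not on_cardio_meds:
        if vitals["resting_hr_bpm"] > max_resting_hr:
            flags.append("elevated_resting_hr_without_cardio_rx")
        if vitals["hrv_ms"] < min_hrv_ms:
            flags.append("low_hrv_without_cardio_rx")
    return flags


conflict = biometric_conflicts([], {"resting_hr_bpm": 108.0, "hrv_ms": 15.0})
consistent = biometric_conflicts([], {"resting_hr_bpm": 64.0, "hrv_ms": 55.0})
print(conflict, consistent)
```

A non-empty flag list would not decline anyone on its own. In this sketch it only means the case should not resolve on the accelerated path without another look.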

Automotive and fleet applications

The waterfall concept isn't limited to life insurance. Companies like Circadify are applying similar data-ordering logic to driver monitoring and fleet health programs, where real-time biometric assessment replaces traditional screening sequences.

Building the decision logic between tiers

The waterfall itself is just a sequence. What makes it work is the decision engine that sits between each tier, evaluating whether the evidence collected so far is sufficient to classify the risk.

This is where things get complicated. The decision logic needs to account for:

- Face amount thresholds (higher amounts require more evidence)

- Applicant age (older applicants typically need more data points)

- Signal consistency across sources (do the data sources agree?)

- Specific red flags that mandate additional evidence regardless of tier (see our breakdown of knock-out criteria and triage rules)

- Regulatory requirements by state or jurisdiction
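The factors in that list can be read as rules that set a floor on how far a case must cascade, whatever the models say. Here is a minimal sketch, assuming made-up cutoffs ($1M face amount, age 60) and a hypothetical `jurisdiction_floor` parameter standing in for state-level requirements.

```python
def minimum_tier(face_amount, age, sources_agree, red_flags, jurisdiction_floor=1):
    """Return the lowest tier at which a case is allowed to resolve.

    All cutoffs are hypothetical; a carrier would set its own.
    """
    tier = jurisdiction_floor
    if face_amount > 1_000_000 or age >= 60:
        tier = max(tier, 2)      # larger or older cases need medical evidence
    if not sources_agree:
        tier = max(tier, 3)      # conflicting sources need an extra signal
    if red_flags:
        tier = 4                 # knock-out criteria force full evidence
    return tier


print(minimum_tier(250_000, 28, True, []))          # young, small face amount
print(minimum_tier(2_000_000, 58, True, []))        # large face amount
print(minimum_tier(250_000, 28, True, ["hazardous_avocation"]))
```

Keeping these floors separate from the predictive models makes them easier to audit, which matters when a regulator asks why a particular case did or did not get additional evidence.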

RGA's research on accelerated underwriting emphasized that the principal risk in these programs is mortality slippage, the gap between what accelerated decisions produce and what fully underwritten decisions would have produced. The waterfall's job is to minimize that gap while still being faster and cheaper than full underwriting.

The decision engine is also where machine learning enters the picture. Carriers are increasingly using predictive models at each tier boundary to estimate whether the current evidence is sufficient or whether the next tier is likely to change the decision. If the model is confident the decision won't change, skip the next tier. If it's uncertain, cascade down.
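The skip-or-cascade rule at a tier boundary might look something like this. The sketch assumes a model that outputs the probability that the next tier would change the decision. The model itself, the 5% base threshold, and the tighter threshold for large face amounts are all assumptions.

```python
def should_cascade(p_decision_changes, face_amount,
                   base_threshold=0.05, high_face_amount=1_000_000):
    """Decide whether to pull the next tier.

    p_decision_changes: model's estimated probability that more evidence
    would change the current decision (the model is assumed, not shown).
    """
    # Tolerate less uncertainty when more money is at risk.
    if face_amount >= high_face_amount:
        threshold = base_threshold / 2
    else:
        threshold = base_threshold
    return p_decision_changes >= threshold


print(should_cascade(0.02, 250_000))     # confident, small case: stop here
print(should_cascade(0.03, 2_000_000))   # same ballpark, large case: cascade
```

Tying the threshold to face amount is one design choice among several. The point is that "confident enough" should be an explicit, tunable number rather than something buried inside the model.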

Common waterfall design mistakes

A few patterns keep showing up in programs that underperform:

Pulling everything in parallel. Some carriers, excited about the speed of digital data sources, pull all available evidence simultaneously rather than sequentially. This is fast, but it's expensive. You're paying for Tier 4 evidence on applicants who would have resolved at Tier 1. The waterfall exists specifically to avoid this.

Static ordering regardless of applicant profile. A 28-year-old applying for $250K term life does not need the same evidence cascade as a 58-year-old applying for $2M whole life. The waterfall should flex based on what Tier 1 data reveals about the applicant.

Ignoring EHR hit-rate variation. If your waterfall assumes EHR data will be available for every applicant, you'll have a fallback problem for the 20-30% where it's not. The waterfall needs a branch, not just a sequence.

Treating biometric data as decorative. Some programs collect contactless vitals but don't integrate them into the actual decision logic. The data gets stored but doesn't influence tier resolution. That's a wasted data pull.

No feedback loop. The waterfall should improve over time. If post-issue mortality studies show that cases resolved at Tier 2 are performing differently than expected, the tier boundaries and resolution criteria need adjustment.
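A feedback loop can start as something very simple: group issued cases by the tier at which they resolved and compare actual to expected claims for each group. The sketch below uses an actual-to-expected (A/E) ratio with a hypothetical 10% tolerance. The record fields and the sample numbers are invented for illustration.

```python
def ae_by_tier(cases):
    """Actual-to-expected death ratio for each resolution tier."""
    totals = {}
    for case in cases:
        bucket = totals.setdefault(case["resolved_at"], [0.0, 0.0])
        bucket[0] += case["actual_deaths"]
        bucket[1] += case["expected_deaths"]
    return {tier: actual / expected
            for tier, (actual, expected) in totals.items() if expected > 0}


def tiers_needing_review(ae_ratios, tolerance=0.10):
    """Tiers whose A/E ratio drifts from 1.0 by more than the tolerance."""
    return sorted(tier for tier, ratio in ae_ratios.items()
                  if abs(ratio - 1.0) > tolerance)


# Invented cohort-level records, not real experience data.
SAMPLE = [
    {"resolved_at": "tier1", "actual_deaths": 10, "expected_deaths": 10.0},
    {"resolved_at": "tier2", "actual_deaths": 6, "expected_deaths": 5.0},
    {"resolved_at": "tier2", "actual_deaths": 7, "expected_deaths": 5.0},
]

ratios = ae_by_tier(SAMPLE)
print(ratios, tiers_needing_review(ratios))
```

In this invented sample, Tier 2 runs at 1.3 times expected, which would be the cue to tighten that tier's resolution criteria. Real studies need credibility adjustments for small cohorts, which this sketch ignores.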

Current research and evidence

The evidence base for waterfall optimization is growing, though it's still concentrated among a few large reinsurers and industry bodies.

Munich Re's EHR retro study, conducted in partnership with MIB, examined 525 life insurance applications across multiple carriers. The study used a data-stacking methodology where underwriters evaluated cases with progressively more evidence, measuring how decisions changed at each stage. The conclusion supported an EHR-first approach for most applicant profiles.

Swiss Re's underwriting requirements optimization whitepaper looked at the cost and accuracy tradeoffs of different evidence combinations. Their analysis confirmed that accelerated underwriting continues to offer paths to greater efficiency, but the specific ordering of requirements matters. A 0.45% mortality improvement was observed in optimized programs.

LIMRA's ongoing surveys of accelerated underwriting adoption show that 36% of companies now use accelerated underwriting for term life policies, up from much lower adoption rates five years ago. The companies with the most mature programs tend to be the ones that have iterated on their waterfall design multiple times.

The NAIC's Accelerated Underwriting Working Group has been examining the regulatory implications of these data source decisions, particularly around which alternative data sources are appropriate for use in insurance underwriting and what governance frameworks should apply.

The future of waterfall design

The next generation of digital health waterfalls will probably look less like a fixed sequence and more like a dynamic routing system. Instead of Tier 1 → Tier 2 → Tier 3 → Tier 4, the system evaluates the applicant profile at intake and selects the optimal evidence path for that specific case.

A young, healthy applicant with strong Rx and MIB signals might skip directly from Tier 1 to a contactless biometric confirmation and get issued in minutes. An older applicant with complex medical history might go straight from Tier 1 to EHR retrieval, bypassing the biometric tier entirely because the clinical records are more relevant.

This kind of adaptive waterfall requires better models at the routing layer. It also requires carriers to be comfortable with the idea that not every applicant follows the same evidence path. That's a cultural shift as much as a technical one.
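At its simplest, the routing layer is a function from applicant profile to evidence path. The sketch below hard-codes the two examples above as rules. A production router would more likely be model-driven, and the age cutoff, the flags, and the path labels here are all hypothetical.

```python
DEFAULT_PATH = ["tier1", "tier2", "tier3", "tier4"]


def select_path(age, clean_rx_and_mib, complex_history, young_cutoff=40):
    """Pick an evidence path at intake instead of always running the full sequence."""
    if complex_history:
        # Clinical records are the most relevant evidence: go straight to EHR.
        return ["tier1", "ehr"]
    if age < young_cutoff and clean_rx_and_mib:
        # Strong Tier 1 signals: confirm with a scan and issue.
        return ["tier1", "biometric_scan"]
    return DEFAULT_PATH


print(select_path(27, True, False))
print(select_path(61, False, True))
print(select_path(45, True, False))
```

Anything that doesn't match a fast path falls back to the fixed sequence, so the adaptive layer can be introduced incrementally without rewriting the existing waterfall.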

The carriers who get this right will have a real structural advantage. Lower cost per policy issued, faster cycle times, better applicant completion rates, and, if the waterfall is well-calibrated, equivalent or better mortality outcomes than traditional underwriting.

Frequently asked questions

What is a digital health waterfall in underwriting?

A digital health waterfall is the ordered sequence in which an underwriting system pulls data sources on an insurance applicant. It starts with cheap, fast sources and cascades to more expensive, slower ones only when needed. The goal is to resolve as many applications as possible at the earliest and cheapest tier.

Why does the order of data sources matter?

The order determines cost, speed, and accuracy. Pulling expensive evidence on every applicant wastes money. Pulling insufficient evidence leads to mortality slippage. The waterfall balances these tradeoffs by resolving easy cases early and investing more evidence only in cases that need it.

Where do contactless vitals fit in the waterfall?

Contactless biometric data from smartphone scans typically sits in Tier 2 or Tier 3. Some carriers run it alongside EHR and claims data for every applicant. Others use it as a triage tool for borderline cases that don't resolve from database evidence alone. The placement depends on the carrier's confidence in the data and their program design.

How often should carriers revisit their waterfall design?

Regularly. Post-issue mortality studies, resolution-rate analysis, and cost-per-decision tracking should all feed back into waterfall optimization. Most mature programs revisit their evidence ordering at least annually, and some run continuous A/B tests on different waterfall configurations.