A/B Testing Digital vs Traditional Underwriting: What Carriers Learn

How life insurance carriers use A/B testing to compare digital and traditional underwriting, with real data on placement rates, mortality slippage, and cycle times.

Life insurance carriers have been talking about digital underwriting for years. But at some point, talk has to become measurement. The most sophisticated carriers aren't just adopting accelerated or automated underwriting pathways and hoping for the best. They're running controlled comparisons between their digital and traditional workflows, tracking everything from placement rates and cycle times to mortality experience over multi-year windows. The results aren't always what the digital evangelists predict, and that's precisely what makes these tests valuable.

According to Gen Re's 2025 U.S. Individual Life Next Gen Underwriting Survey, 59% of individual life applications now qualify for an accelerated underwriting path, with 12% eligible for fully automated decisioning and 41% still processed through traditional channels.

Why Carriers Run Parallel Underwriting Paths

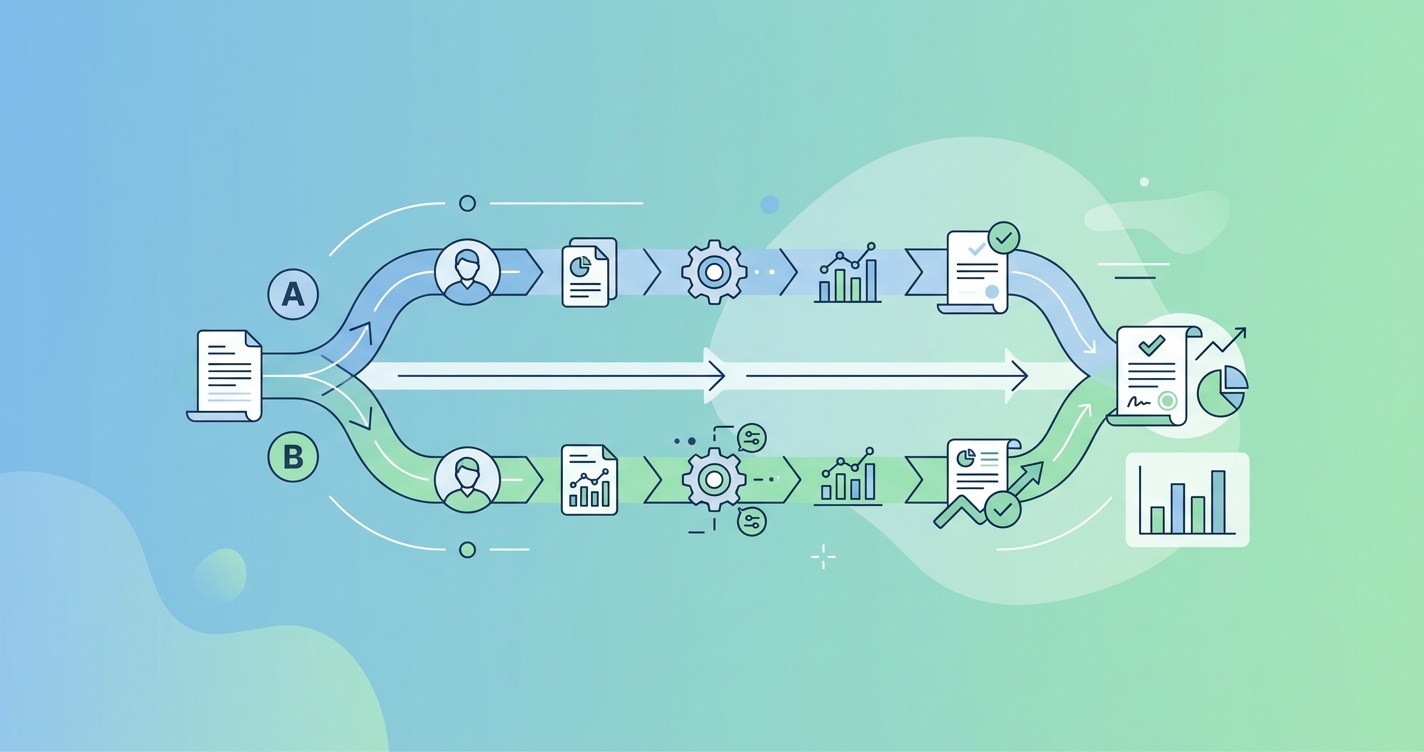

The core logic behind A/B testing in underwriting is straightforward: you can't know what you're gaining or losing from a new workflow unless you measure it against what it replaced. Most carriers running these comparisons route a percentage of eligible applications through their accelerated or digital pathway while keeping a control group in the traditional, fully underwritten channel. The comparison produces data on three things that matter: how many applicants actually complete the process, how quickly policies get issued, and whether the digital cohort develops worse mortality experience over time.

This isn't theoretical. Gen Re's 2024 U.S. Individual Life Accelerated Underwriting Survey found that carriers with the highest acceleration rates reported lower placement rates, while carriers with lower acceleration rates saw higher placement rates. That tradeoff is real, and it only becomes visible when you're tracking both pathways side by side. Munich Re's survey data on accelerated underwriting trends confirmed a similar finding: there's a strong correlation between acceleration rate and the resulting offer and placement rates, and that correlation isn't always in the direction carriers expect.

The reason for the tradeoff is intuitive once you see it, and it connects directly to how carriers measure accelerated underwriting ROI. Digital underwriting pathways that cast a wide net and accelerate a high percentage of applicants are inevitably letting through some cases that would have been rated, postponed, or declined under traditional review. The question isn't whether this happens. The question is how much it costs in mortality slippage, and whether the operational savings and placement gains justify that cost.

What the Data Actually Shows

The Society of Actuaries published a mortality slippage study in August 2024, authored by Lisa Seeman and Katy Herzog, that lays out the current state of the evidence. The key finding: mortality slippage estimates for accelerated underwriting programs range between 6% and 15%, depending on the carrier's eligibility criteria, the data sources used in the digital pathway, and how long the program has been running.

That range is wide enough to matter. A carrier seeing 6% slippage on its accelerated book can probably justify the program on operational savings alone. A carrier at 15% might need to rethink its eligibility rules or add data sources.
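The "justify it on operational savings alone" judgment comes down to simple arithmetic. The sketch below is a single-period simplification. It assumes slippage acts as a proportional uplift on the claims the accelerated book would have produced at traditional-underwriting mortality, and it uses hypothetical figures for expected claims and operational savings, not survey data.

```python
# Hypothetical figures for illustration only; not taken from any survey.
EXPECTED_CLAIMS = 50_000_000     # expected claims on the accelerated book at traditional-underwriting mortality
OPERATIONAL_SAVINGS = 5_000_000  # exam, lab, and handling costs avoided by the accelerated path


def slippage_cost(expected_claims: float, slippage: float) -> float:
    """Extra claims if mortality runs `slippage` (e.g. 0.06 for 6%) above the traditional basis."""
    return expected_claims * slippage


def net_benefit(expected_claims: float, slippage: float, savings: float) -> float:
    """Operational savings minus the extra claims attributed to slippage."""
    return savings - slippage_cost(expected_claims, slippage)


for s in (0.06, 0.15):
    print(f"{s:.0%} slippage: net benefit {net_benefit(EXPECTED_CLAIMS, s, OPERATIONAL_SAVINGS):,.0f}")
```

With these assumed inputs, 6% slippage leaves a net gain of about $2 million, and 15% turns it into a net loss of about $2.5 million. A real evaluation would project claims over the policy lifetime and compare present values rather than a single period.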

Placement and Cycle Time Comparison

| Metric | Traditional Underwriting | Accelerated/Digital Underwriting | Difference |
|---|---|---|---|
| Median cycle time (application to issue) | 20-30 days | 3-7 days | 75-85% reduction |
| Placement rate (policies placed vs. applied) | Higher per-case | Lower per-case, higher volume | Varies by acceleration rate |
| Not-taken rate | Lower (applicants already invested) | Higher (faster process, less commitment) | 5-15% gap reported |
| Mortality slippage vs. expected | Baseline | 6-15% above baseline | SOA 2024 study range |
| Data sources used | Paramedical exam, labs, APS | MIB, Rx, MVR, EHR, credit | Fluid-based vs. data-driven |

The cycle time difference is where digital underwriting makes its most obvious case. Moving from a typical 25-day cycle to a 5-day one changes the applicant experience in ways that affect not-taken rates, distributor satisfaction, and competitive positioning. LIMRA has documented for years that longer cycle times correlate with higher not-taken rates, and anything that compresses the timeline tends to improve placement volume even if the per-case placement rate drops.
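A lower per-case placement rate can still produce more placed policies, but only if the faster pathway attracts or retains enough additional applications. The sketch below shows that arithmetic. The application counts and placement rates are hypothetical, chosen only to make the mechanism visible.

```python
from dataclasses import dataclass


@dataclass
class Pathway:
    name: str
    applications: int      # applications submitted in the period
    placement_rate: float  # share of those applications that become placed policies

    def placed(self) -> int:
        """Placed policies, rounded to a whole count."""
        return round(self.applications * self.placement_rate)


# Hypothetical volumes: assumes the faster path draws 40% more applications.
traditional = Pathway("traditional", applications=1_000, placement_rate=0.60)
accelerated = Pathway("accelerated", applications=1_400, placement_rate=0.50)

for p in (traditional, accelerated):
    print(f"{p.name}: {p.placement_rate:.0%} placement, {p.placed()} policies placed")
```

Under these assumptions the accelerated path places 700 policies against 600, despite a 10-point lower placement rate. If the application lift were smaller, the volume advantage would shrink or reverse.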

The Mortality Slippage Question

Mortality slippage is the metric that keeps chief underwriting officers up at night. If you remove paramedical exams and lab tests from the underwriting process, you're removing the most objective health data available. The replacement data sources, including prescription history, motor vehicle records, MIB hits, and increasingly electronic health records, are powerful but not perfect substitutes.

Munich Re's research on digital health records found that electronic health records reduced insurance risk assessment costs by 35% across a study of 525 life insurance applications. But cost reduction and risk accuracy aren't the same thing. The question carriers need to answer through their A/B tests is whether the digital data sources produce equivalent risk classification, not just whether they're cheaper.

Gen Re's 2025 survey found that the top goals for accelerated underwriting workflows were reducing time to issue (52% of respondents), managing mortality slippage (45%), and increasing sales (41%). That mortality slippage management sits at number two tells you something about where the industry's anxiety sits. Carriers aren't just trying to go fast. They're trying to go fast without blowing up their mortality assumptions.

The SOA study recommended several monitoring best practices for carriers running accelerated programs. These included tracking mortality experience by underwriting pathway over rolling multi-year windows, comparing actual-to-expected ratios between accelerated and traditional cohorts, and running regular reviews of cases that would have been rated or declined under traditional rules but were issued standard through the accelerated path.
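The first two of those practices, actual-to-expected (A/E) ratios by pathway over rolling windows, can be sketched in a few lines. This example expresses slippage as the accelerated A/E divided by the traditional A/E, minus 1. That is one common way to state it and is assumed here, not taken from the SOA study. The record layout and all death counts are hypothetical, and in practice the expected deaths would come from the carrier's own mortality basis.

```python
from collections import defaultdict

# (pathway, exposure_year, actual_deaths, expected_deaths); all values hypothetical.
RECORDS = [
    ("traditional", 2020, 50, 50.0), ("accelerated", 2020, 42, 40.0),
    ("traditional", 2021, 50, 50.0), ("accelerated", 2021, 44, 40.0),
    ("traditional", 2022, 50, 50.0), ("accelerated", 2022, 46, 40.0),
    ("traditional", 2023, 50, 50.0), ("accelerated", 2023, 48, 40.0),
]


def ae_by_pathway(records, years):
    """Actual-to-expected ratio per pathway, summed over the given exposure years."""
    actual, expected = defaultdict(float), defaultdict(float)
    for pathway, year, deaths, exp_deaths in records:
        if year in years:
            actual[pathway] += deaths
            expected[pathway] += exp_deaths
    return {p: actual[p] / expected[p] for p in expected}


def rolling_slippage(records, window=3):
    """Accelerated A/E relative to traditional A/E, minus 1, for each rolling window of years."""
    years = sorted({year for _, year, _, _ in records})
    result = {}
    for i in range(len(years) - window + 1):
        span = years[i:i + window]
        ae = ae_by_pathway(records, set(span))
        result[(span[0], span[-1])] = ae["accelerated"] / ae["traditional"] - 1
    return result


for (start, end), slip in rolling_slippage(RECORDS).items():
    print(f"{start}-{end}: {slip:.1%} relative slippage")
```

With these made-up counts, relative slippage rises from 10% in the 2020-2022 window to 15% in 2021-2023. A trend like that is the kind of drift that rolling windows surface and a single cumulative ratio would blur.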

How Carriers Structure Their Tests

Not every carrier calls what they're doing "A/B testing" in the formal statistical sense. Some run true randomized parallel paths where eligible applications are randomly assigned to digital or traditional review. Others take a cohort approach, running the digital pathway for a defined period and comparing outcomes against historical traditional-underwriting baselines. Both approaches have limitations.
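For the randomized version, one common way to assign arms is to hash a stable application identifier, so the same application always lands in the same arm even if it is resubmitted or the job is rerun. This is a sketch under stated assumptions. The arm names, weights, salt string, and `assign_arm` helper are all hypothetical. Eligibility screening is assumed to happen before this call, so only applications that would qualify for the digital path are randomized.

```python
import hashlib

# Hypothetical arm names and weights; a third arm (for example, digital plus
# contactless biometrics) would just be another entry in this list.
TWO_ARM = [("traditional", 0.5), ("digital", 0.5)]


def assign_arm(application_id: str, arms, salt: str = "uw-ab-test-1") -> str:
    """Deterministically map an eligible application to one arm in proportion to the weights."""
    total = sum(weight for _, weight in arms)
    digest = hashlib.sha256(f"{salt}:{application_id}".encode("utf-8")).hexdigest()
    # First 32 bits of the hash, scaled to a roughly uniform point in [0, total).
    point = int(digest[:8], 16) / 2**32 * total
    cumulative = 0.0
    for name, weight in arms:
        cumulative += weight
        if point < cumulative:
            return name
    return arms[-1][0]  # guards against floating-point rounding at the upper edge


print(assign_arm("APP-000123", TWO_ARM))
```

The salt should stay fixed for the life of the test, because changing it reshuffles every assignment and mixes the cohorts.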

The randomized approach produces cleaner data but creates operational complexity. You need underwriting staff trained in both pathways, and you need to explain to distributors why two similar applicants might have wildly different experiences. The cohort approach is operationally simpler but introduces time-period confounding: market conditions, product mix, and distribution channels change between periods, making it harder to isolate the effect of the underwriting pathway itself.
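Operational metrics such as placement rate can be compared across randomized arms with a standard two-proportion z-test. This is a textbook technique, not something the surveys above prescribe. The sketch assumes independent applications and reasonably large arms, and the counts are hypothetical. The same code will run on a historical cohort comparison, but a small p-value there cannot rule out the time-period confounding described above.

```python
import math


def two_proportion_z_test(placed_a: int, n_a: int, placed_b: int, n_b: int):
    """Two-sided z-test for a difference in placement rates between two arms."""
    p_a, p_b = placed_a / n_a, placed_b / n_b
    pooled = (placed_a + placed_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical counts from a randomized test: traditional arm vs. digital arm.
z, p = two_proportion_z_test(600, 1_000, 540, 1_000)
print(f"z = {z:.2f}, two-sided p = {p:.4f}")
```

With these counts, the 60% vs. 54% gap gives z of roughly 2.7. That is unlikely to be noise at arms of 1,000 applications each.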

Common Test Structures

| Approach | How It Works | Strengths | Weaknesses |
|---|---|---|---|
| Randomized parallel path | Eligible apps randomly assigned to digital or traditional | Clean causal inference | Operationally complex, distributor confusion |
| Historical cohort comparison | Digital pathway launched; compared to prior-period traditional data | Simple to implement | Time-period confounding, changing mix |
| Shadow scoring | All apps go through traditional review; digital pathway runs in parallel without affecting decisions | No risk to book of business | No real-world behavioral data (applicant experience unchanged) |
| Phased rollout by state/channel | Digital pathway launched in select states or distribution channels first | Real-world data with limited exposure | Geographic and channel selection bias |

Shadow scoring deserves special mention because several large carriers have used it as a first step before committing to a live digital pathway. In a shadow scoring model, every application goes through traditional underwriting, but the digital pathway's algorithms also evaluate each case. The carrier can then compare what the digital pathway would have decided against what the human underwriter actually decided, without any risk to the actual book of business.
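A shadow-scoring comparison reduces to a concordance check between the underwriter's decision and the digital decision on each case. Cases where the digital path would have been more lenient are the ones that would feed mortality slippage. Cases where it would have been stricter are where placement could be lost. In the sketch below, the four decision classes, their ordering, and the sample cases are hypothetical; real programs use finer risk classes and table ratings.

```python
from collections import Counter

# Hypothetical decision classes, ordered from most to least favorable to the applicant.
SEVERITY = {"preferred": 0, "standard": 1, "rated": 2, "declined": 3}


def compare_shadow(cases):
    """cases: iterable of (case_id, human_decision, digital_decision).

    Returns the agreement rate, the IDs where the digital decision was more
    lenient than the underwriter's, and a (human, digital) count matrix.
    """
    cases = list(cases)
    matrix = Counter((human, digital) for _, human, digital in cases)
    lenient = [cid for cid, human, digital in cases if SEVERITY[digital] < SEVERITY[human]]
    agreement = sum(n for (human, digital), n in matrix.items() if human == digital) / len(cases)
    return agreement, lenient, matrix


SAMPLE = [
    ("A1", "standard", "standard"),
    ("A2", "rated", "standard"),      # digital would have issued a rated case standard
    ("A3", "declined", "rated"),      # digital would have offered a declined case
    ("A4", "preferred", "standard"),  # digital stricter than the underwriter
    ("A5", "preferred", "preferred"),
]
agreement, lenient, matrix = compare_shadow(SAMPLE)
print(f"agreement {agreement:.0%}, lenient cases: {lenient}")
```

The list of lenient case IDs is the natural input for the case-level reviews the SOA study recommends.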

RGA has promoted digital health data scoring solutions that work in exactly this way. Its service evaluates structured electronic medical record and claims data and provides an underwriting risk score that carriers can test against their existing decisions before going live. This kind of pre-launch validation is becoming standard practice for carriers that want to stay within their boards' risk appetite while still moving toward digital workflows.

What Carriers Learn That They Don't Expect

The most interesting findings from carrier A/B tests often aren't the headline metrics. A few patterns show up repeatedly.

First, the applicant population changes. When you offer a faster, simpler process, you attract applicants who wouldn't have applied under the traditional model. Some of these are good risks who were deterred by the inconvenience of paramedical exams. Some are risks who prefer not to have their health examined too closely. Disentangling these two groups is one of the harder analytical challenges in evaluating digital underwriting programs.

Second, distributor behavior shifts. Agents and brokers who know about the accelerated pathway start steering cases toward it, sometimes overriding the carrier's intended eligibility criteria by coaching applicants on how to qualify. This gaming effect can distort the test results if it isn't accounted for.

Third, the not-taken rate story is more complicated than "faster is better." Some carriers have found that very fast digital issuance actually increases not-taken rates for certain products, because the speed removes the cooling-off period during which applicants talk themselves into the purchase. The relationship between speed and placement isn't linear, and it varies by product type, face amount, and distribution channel.

Connecting Digital Underwriting to Better Health Data

The next frontier in carrier A/B testing is incorporating richer biometric data into the digital pathway. Current accelerated programs rely heavily on prescription history, credit data, MIB checks, and motor vehicle records. These are strong predictors of some risks but weak on others, particularly cardiovascular health, metabolic conditions, and lifestyle factors that paramedical exams historically caught.

Technologies like remote photoplethysmography are opening the door to a middle ground: objective biometric data captured through a smartphone camera, without the cost or friction of a traditional paramedical exam. Companies like Circadify are developing contactless vital signs screening that could slot into the digital underwriting pathway as an additional data source, potentially closing some of the mortality slippage gap without reintroducing the cycle time delays that make traditional underwriting unpopular with applicants and distributors.

For carriers currently running or planning A/B tests between digital and traditional underwriting, adding biometric data sources to the digital arm creates a natural extension of the experiment. Instead of a binary comparison between "digital without health data" and "traditional with health data," carriers can test a third pathway: digital with contactless biometric screening. The question is whether that middle path delivers the speed benefits of digital underwriting with the risk classification accuracy closer to what traditional underwriting achieves.

Current Research and Evidence

The evidence base for digital underwriting comparison studies is growing, though much of the carrier-level data remains proprietary.

Gen Re has published the most comprehensive public survey data. Their annual (now "Next Gen Underwriting") surveys have tracked the evolution of accelerated programs across the U.S. individual life market since the mid-2010s. The 2025 survey is the most current, covering 2024 application data and showing that the majority of carriers now operate some form of accelerated pathway. Gen Re's data on throughput rates, acceleration rates, and offer rates provides the best publicly available benchmark for carriers measuring their own programs.

The Society of Actuaries' 2024 mortality slippage study by Seeman and Herzog represents the most rigorous published analysis of mortality experience differences between accelerated and traditional underwriting cohorts. The study's 6-15% slippage range has become a widely cited benchmark in the industry.

Munich Re's 2024 accelerated underwriting survey added granularity on the relationship between acceleration rates and placement rates. Its research on EHR utilization has documented the growing role of electronic health records as a risk assessment data source in digital programs, including how that data integrates with existing checks.

LIMRA's ongoing research on placement rates and not-taken rates provides the demographic and behavioral context for understanding how faster underwriting processes affect the applicant funnel, even if its data isn't specifically structured as an A/B comparison.

The Future of Underwriting Experimentation

The carriers that will benefit most from A/B testing aren't the ones running a single test and declaring victory. They're the ones building experimentation into their ongoing operations. As data sources evolve, as applicant expectations change, and as competitive pressure forces faster cycle times, the optimal underwriting workflow is a moving target.

The shift toward what Gen Re now calls "Next Gen Underwriting" reflects this reality. It's no longer about whether to adopt digital underwriting. It's about continuous optimization across a spectrum of automation, data enrichment, and human review. Carriers that treat their underwriting pathways as permanent experiments, adjusting eligibility criteria, data sources, and decision thresholds based on ongoing measurement, will outperform carriers that pick a model and stick with it.

Frequently Asked Questions

What is mortality slippage in accelerated underwriting?

Mortality slippage is the difference in mortality experience between applicants underwritten through an accelerated or digital pathway and those underwritten through traditional methods. The SOA's 2024 study by Seeman and Herzog estimated that accelerated programs experience 6-15% higher mortality than expected, depending on program design and data sources used. It measures the cost of removing traditional health verification from the underwriting process.

How long does it take to measure underwriting A/B test results?

Mortality experience takes years to develop meaningful credibility. Most actuaries recommend a minimum of 3-5 years of policy duration data before drawing conclusions about mortality differences between underwriting pathways. Operational metrics like cycle time, placement rate, and not-taken rate can be measured within months. This timing mismatch is one of the fundamental challenges of underwriting experimentation.
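One way to see why mortality needs years is the classical limited-fluctuation credibility standard. It asks how many deaths are needed before the observed count is within plus or minus k of its true mean with probability p. With the conventional p = 0.90 and k = 0.05, the answer is about 1,082 deaths. The sketch below applies that standard under simplifying assumptions: Poisson claim counts, a constant in-force count, and a constant death rate. The cohort size and death rate are hypothetical, and this is an illustration, not the method any particular study used.

```python
import math
from statistics import NormalDist


def full_credibility_claims(p: float = 0.90, k: float = 0.05) -> float:
    """Deaths needed so the observed count is within +/-k of its mean with probability p (Poisson)."""
    z = NormalDist().inv_cdf((1 + p) / 2)
    return (z / k) ** 2


def credibility_factor(observed_claims: float, p: float = 0.90, k: float = 0.05) -> float:
    """Square-root rule for partial credibility, capped at 1."""
    return min(1.0, math.sqrt(observed_claims / full_credibility_claims(p, k)))


def years_to_full_credibility(policies: int, annual_death_rate: float,
                              p: float = 0.90, k: float = 0.05) -> float:
    """Years of exposure needed, assuming a constant in-force count and death rate."""
    return full_credibility_claims(p, k) / (policies * annual_death_rate)


# Hypothetical accelerated cohort: 100,000 policies at a 0.2% annual death rate.
print(round(full_credibility_claims()))
print(round(years_to_full_credibility(100_000, 0.002), 1))
print(round(credibility_factor(200), 2))
```

Under these assumptions, the cohort produces about 200 deaths a year and needs roughly 5.4 years to reach full credibility. One year of data earns a credibility factor of only about 0.43. Early-duration death rates for newly underwritten lives are often lower than this, which stretches the timeline further. Comparing two cohorts also needs more deaths than measuring one, because both A/E ratios carry noise.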

Do accelerated underwriting programs always have worse mortality?

Not necessarily. Programs with conservative eligibility criteria, strong data sources, and appropriate face amount limits can achieve mortality experience close to traditional underwriting. Gen Re's surveys show wide variation across carriers. The programs with the worst slippage tend to be those that accelerate a high percentage of applications without sufficient compensating data. Programs that combine multiple digital data sources, like Rx, MIB, MVR, and EHR, generally perform better than those relying on fewer inputs.

What role do electronic health records play in digital underwriting?

EHRs are becoming one of the most important data sources for closing the gap between digital and traditional underwriting. Munich Re's research found that EHRs reduced risk assessment costs by 35%, and their 2024 survey data showed increasing carrier adoption. EHRs provide structured clinical data that's closer to what a paramedical exam captures than other digital data sources like prescription history or credit data. The challenge is inconsistent availability and format standardization across health systems.